Tonal: Multimodal UX research and design

UX Designer · Aug '23 – Oct '25 · Team: 2 UX Designers, 1 Project Manager

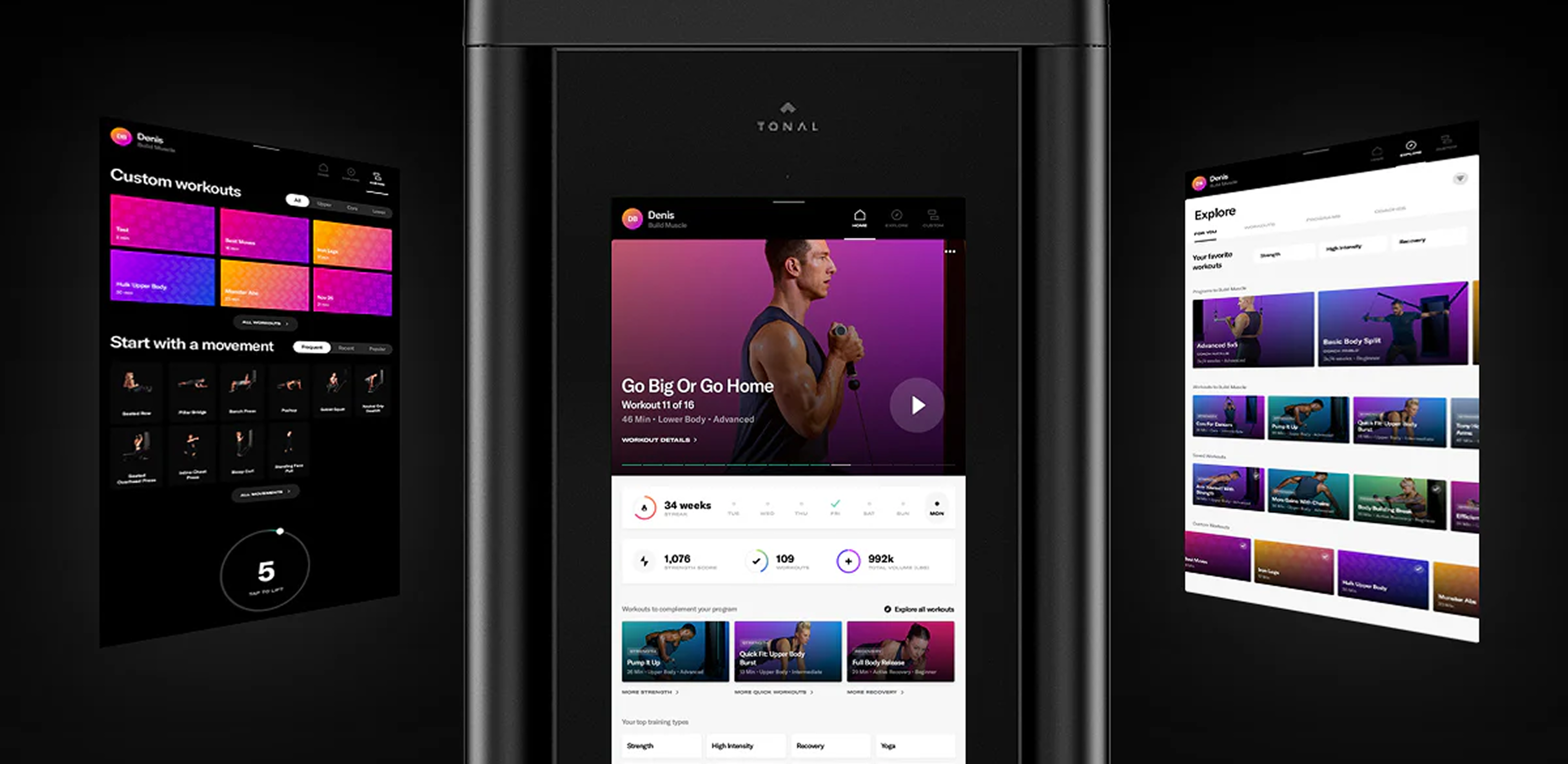

I led a multimodal UX design project for Tonal's smart home gym to understand why first-time users hesitated during key interaction points. The goal was to move beyond self-reported feedback and identify friction through behavioral and physiological signals.

Tonal's product team was preparing a roadmap update and needed evidence grounded in real user behavior. The project focused on uncovering hidden cognitive load across hardware setup and software navigation.

Interface designs and prototypes from this project are omitted due to NDA restrictions.

Research process

The work followed a structured end-to-end sequence: Participant Recruitment → Setup & Orientation → Usability Sessions → Observation & Annotation → Data Analysis → UI and Cognitive Mapping → Interface Design.

We recruited 24 participants who matched Tonal's target demographic: fitness-conscious, tech-comfortable adults with beginner to intermediate strength training experience.

Usability testing

Sessions ran across two environments: a Nordstrom retail floor, for authentic first-impression behavior in a real purchase context, and an ASU lab, for controlled conditions with consistent lighting and a quiet space that supported think-aloud and eye-tracking accuracy.

Behavioral patterns and friction points were consistent across both environments, validating the findings regardless of context.

Usability Testing: Nordstrom Retail

Usability Testing: ASU Laboratory

Data collection

We combined eye tracking, electrodermal activity (EDA), and think-aloud protocols to capture behavioral and physiological signals during interaction.

The technical constraints were significant. Tonal's large display and user movement during workouts made eye-tracking complex, while sudden resistance changes required a clear method to separate exertion signals from cognitive stress.

Multimodal data collection

Data collection: session recording

Timeline extension

Each 60- to 90-minute session generated hundreds of fixation events and continuous EDA waveforms that required qualitative coding. Across 24 participants, this resulted in more than 1,500 minutes of manual annotation across synchronized multimodal streams.

I realized the initial timeline underestimated the coding effort and requested an extension to preserve data quality. This required alignment with stakeholders and early communication of tradeoffs, shifting focus from speed of delivery to accuracy of pattern extraction.

Data analysis

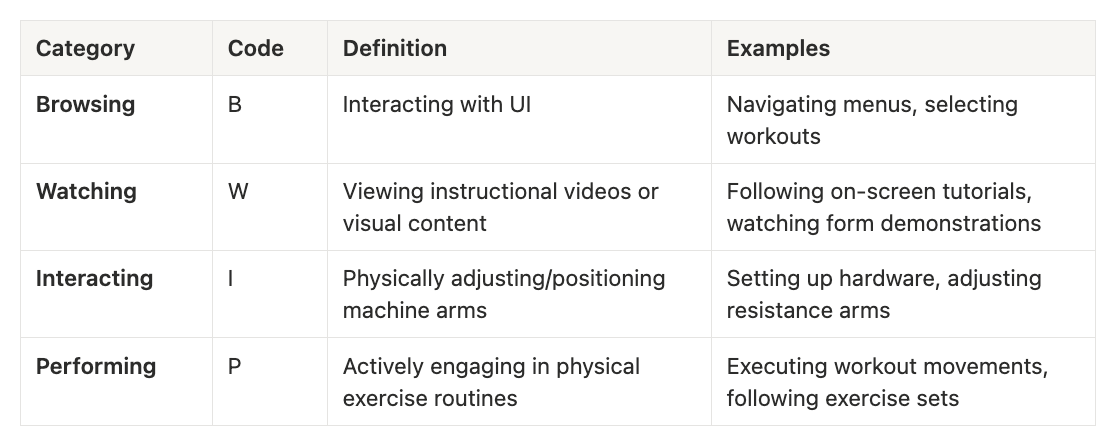

I built a four-category annotation taxonomy to code every user action consistently across all participants, turning individual observations into aggregatable patterns.

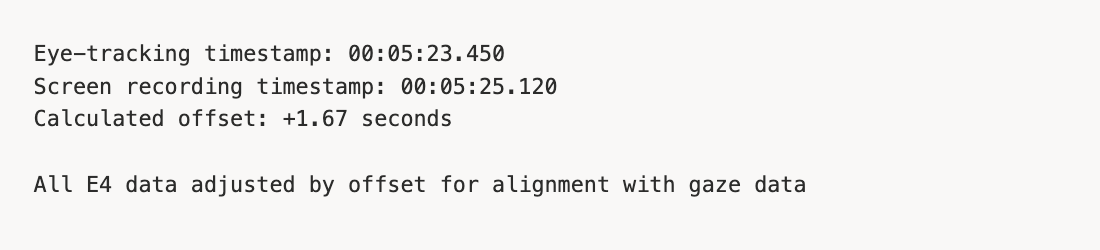

The three data streams used different timestamp formats and sampling rates, so synchronization was manual: we marked shared behavioral events across recordings, calculated the offsets between them, and merged the streams into a unified timeline per participant.

Illustrative timestamps for methodology demonstration
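The offset step can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the tooling used in the project: the stream labels, the `Sample` structure, the function names, and every timestamp below are hypothetical. It assumes one shared behavioral event has been marked in each recording and that each stream's clock differs from the others only by a constant shift.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    stream: str   # hypothetical stream label: "eye", "eda", or "think_aloud"
    t: float      # seconds on that stream's own clock
    note: str     # reading or annotation, kept as text for simplicity


def offsets_to_reference(anchor_times, reference):
    """Seconds to add to each stream's clock so the shared event lines up."""
    ref_t = anchor_times[reference]
    return {stream: round(ref_t - t, 3) for stream, t in anchor_times.items()}


def merge_streams(samples, offsets):
    """Shift every sample onto the reference clock and sort into one timeline."""
    shifted = [
        Sample(s.stream, round(s.t + offsets[s.stream], 3), s.note)
        for s in samples
    ]
    return sorted(shifted, key=lambda s: s.t)


# Illustrative values only: when the same shared event appears in each recording.
anchor_times = {"eye": 12.4, "eda": 3.1, "think_aloud": 15.0}
offsets = offsets_to_reference(anchor_times, reference="eye")

samples = [
    Sample("think_aloud", 17.2, "where do I tap?"),
    Sample("eda", 5.1, "skin conductance peak"),
    Sample("eye", 14.0, "fixation on menu"),
]
timeline = merge_streams(samples, offsets)
```

The sketch leaves each stream at its native sampling rate and only shifts timestamps. Resampling to a common rate, or correcting for clock drift over a long session, would need additional steps.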

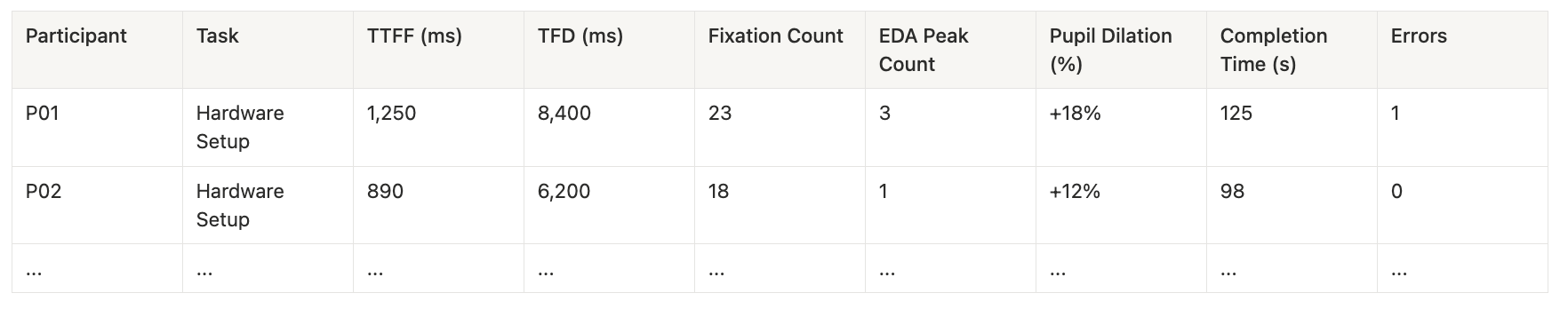

After syncing individual datasets, we consolidated everything into a master spreadsheet to extract cross-participant insights and identify interaction patterns.

Example structure (note: data shown is illustrative for methodology demonstration only).
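One way to turn such a consolidated sheet into cross-participant patterns is to count how many distinct participants show the same annotation code at the same interaction point. The sketch below assumes each row holds a participant ID, an interaction point, and an annotation code. The row layout, the function name, and the codes `hesitation` and `backtrack` are hypothetical placeholders, not the project's actual taxonomy or data.

```python
from collections import defaultdict


def participants_per_pattern(rows):
    """Count distinct participants for each (interaction point, code) pair."""
    seen = defaultdict(set)
    for participant, point, code in rows:
        seen[(point, code)].add(participant)
    return {key: len(people) for key, people in seen.items()}


# Illustrative rows only: (participant ID, interaction point, annotation code).
rows = [
    ("P01", "onboarding", "hesitation"),
    ("P02", "onboarding", "hesitation"),
    ("P01", "onboarding", "hesitation"),  # repeat by the same participant
    ("P03", "navigation", "backtrack"),
]
counts = participants_per_pattern(rows)
```

Counting distinct participants rather than raw rows keeps one person who repeats a behavior many times from looking like a widespread pattern.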

Validated findings

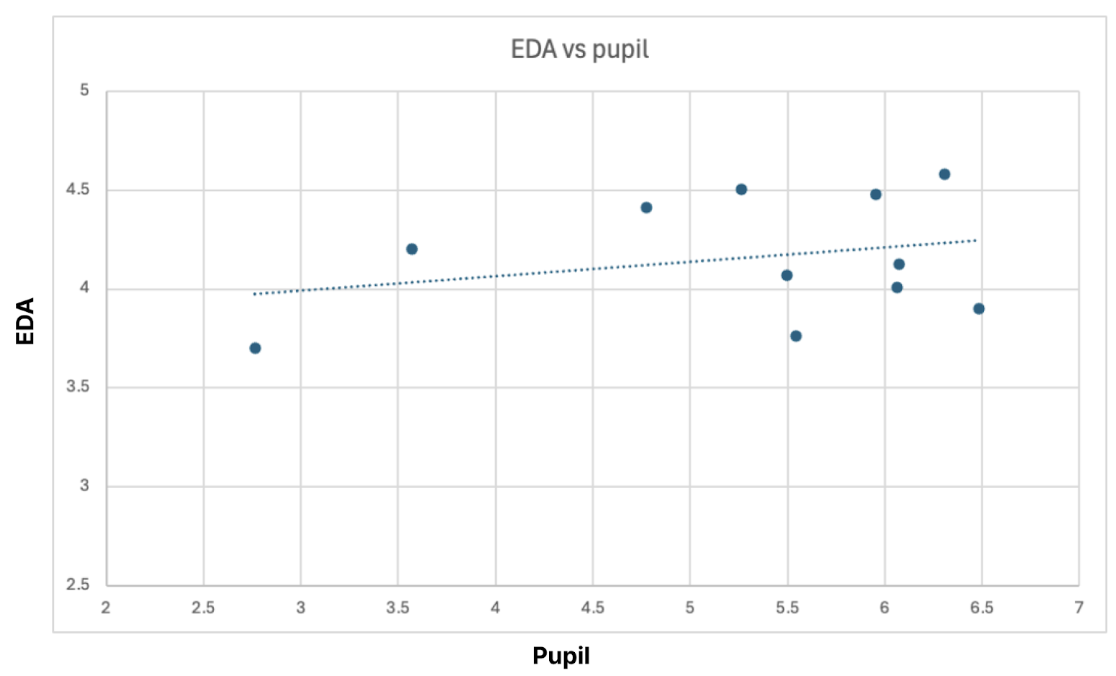

Through correlation analysis, we validated that a simultaneous increase in pupil dilation and EDA at the same interaction point reliably indicated cognitive stress rather than physical exertion. This provided a consistent signal for identifying hidden usability friction beyond self-reported feedback.
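The co-occurrence idea can be illustrated with a simple rule: flag an interaction point only when both signals rise above their baselines. This is a simplified stand-in for the correlation analysis, not a reproduction of it. The function name, the 10% thresholds, the interaction point names, and all values are assumptions made for illustration.

```python
def flag_joint_increase(points, pupil_min=0.10, eda_min=0.10):
    """Return interaction points where pupil dilation and EDA both rose.

    Each point is (name, pupil_change, eda_change), with changes expressed
    as fractions of the participant's baseline. Thresholds are hypothetical.
    """
    flagged = []
    for name, pupil_change, eda_change in points:
        if pupil_change > pupil_min and eda_change > eda_min:
            flagged.append(name)
    return flagged


# Illustrative values only.
points = [
    ("open_menu", 0.18, 0.22),          # both signals rise: flagged
    ("resistance_change", 0.02, 0.30),  # EDA rises alone: not flagged
    ("read_summary", 0.03, 0.01),       # neither rises: not flagged
]
flagged = flag_joint_increase(points)
```

Requiring both signals is what keeps a point like the hypothetical `resistance_change`, where EDA rises on its own, out of the flagged set.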

Interface friction

I created flowcharts mapping every click and transition through the Explore Workout and Custom Workout Creation flows. By mapping physiological responses to each interaction point, we identified friction in four specific areas: onboarding, navigation, custom workout creation, and in-rep feedback.

What initially appeared as isolated usability issues clustered around transitions between user intent and system response. This reframed friction from UI complexity to feedback timing and expectation mismatch.

Impact

The findings reshaped how the team interpreted first-time user hesitation in Tonal's onboarding experience. They shifted the focus from interface complexity to the timing and clarity of system feedback during key interactions.

Our recommendations were incorporated into Tonal's upcoming onboarding and navigation updates. The project also established a repeatable multimodal research framework for evaluating future interface changes.

Specific data, findings, and prototypes are omitted due to NDA. This case study focuses on research methodology and process.